Mythbusting common AI misconceptions for financial institutions

At this point, the statement “AI is booming” is as obvious as “water is wet” or “the sky is blue.” Businesses, institutions, and individuals are embracing the newest artificial intelligence (AI) tools or applications to accomplish a variety of tasks. Artificial intelligence is incredibly expansive. It encompasses everything from deploying chatbots and streamlining administrative tasks to generating imaginative visuals and forecasting consumer trends, among other uses.

This buzz has created a race among organizations to adopt AI systems, with varying degrees of understanding and success. As a result, the demand for AI in every industry, including banking and credit unions, has skyrocketed. According to our latest Cornerstone Advisors report, 54% of community-based financial institutions (FIs) plan on investing over $100,000 in AI within the next three years.

As we know, the internet can blur the lines between fact and fiction, and AI is no exception. Let’s explore some of the common AI misconceptions and what they mean for your financial institution.

Expectation: AI is a brand-new technology that’s only been used in the last few years. It’s too immature an invention to safely adopt.

Reality: AI and machine learning models have been around since the 1950s.

In 1950, mathematician Alan Turing proposed what became known as the “Turing Test” to assess whether a computer could exhibit intelligent behavior indistinguishable from a human’s. Ever since, the field of computer science has grown and developed programs for solving problems, ranging anywhere from playing chess to understanding human language. Over time, these techniques have evolved to use even more information and solve more complex issues, like predicting borrowers’ behavior patterns.

In recent years, AI has become part of our everyday lives. We use it in email spam filters, online shopping suggestions, GPS apps, and voice assistants like Siri and Alexa.

Expectation: All AI is just like ChatGPT.

Reality: While ChatGPT is a popular AI tool, not all AI technology works the same way.

ChatGPT is a generative AI tool that draws on large amounts of data to create human-like responses. It uses a large language model (LLM) to learn relationships between words and their meanings. Generative AI models can be wide-ranging, like ChatGPT, which gathers data from all over the internet. They can also be trained to focus on one specific area. For example, some apps are trained specifically to help detect skin cancer.

When using specific data sources from your organization, generative AI can help with anything from customer service to strategic guidance. It can access and organize multiple data sources without having to manually build dashboards or reports, providing insights in a clean, seamless manner.

While generative AI is useful, it’s only one type of AI tool. Machine learning models, on the other hand, focus on identifying patterns in data and making accurate predictions. For instance, they can more accurately predict credit risk by diving deep into a credit report and finding correlations and patterns among thousands of variables to determine the likelihood of default. They can also detect fraud, flagging things like misreported income or identity theft using sophisticated algorithms.

Many types of AI-powered technologies exist. For lenders, the two most common are:

- Machine learning (ML): Used for things like credit underwriting and fraud detection. Machine learning uses algorithms to more accurately predict specific outcomes, such as how risky an applicant is. These AI models are “locked” and do not learn on their own, allowing for all decisions and data to be transparent and consistent.

- Generative AI (GenAI): As described above, generative AI can create new content from various data sources, in contrast to the prescribed outputs of ML. It keeps “learning” based on new inputs, and can be used for chatbots and intelligence reporting. GenAI can create a lot of efficiencies, but it is not designed to make decisions on its own. It still requires human oversight.

Expectation: Implementing AI in your organization will lead to a robot takeover.

Reality: Integrating AI strategically with the right partners will strengthen your organization.

OK, maybe “robot takeover” was a bit dramatic. Regardless, people in several industries (not just financial services) have a lot of fear about how AI will impact their jobs. In the financial services industry, AI models can greatly reduce the time spent on administrative tasks. This allows underwriters and credit analysts to focus on more complex issues.

For example, using AI for automated underwriting can help credit unions and banks automate up to 80% of consumer credit decisions. That still leaves 20% of applications that will require a human touch. Usually, these applications are from borrowers who need more time and support.

Instead of manually processing applications that machine learning can handle, underwriters can focus on loans needing human review. They can have more meaningful conversations and provide advice and support to help their customers succeed.

For analysts and leaders, using generative AI tools is a game-changer. These tools can quickly synthesize large amounts of data, leading to better and more informed decisions. Experts will still need to interpret the information and make decisions based on it, but they can do this faster and more frequently.

Expectation: AI can get you in trouble with regulators.

Reality: AI can allow you to be more compliant and less biased.

It is true that not all AI algorithms are created equal, and some can exacerbate biased decisioning. This poses a risk for lenders, who need reliable credit decisioning models that will give everyone a fair shot.

That’s why it’s critical that AI decisioning models be fully transparent, documented, and undergo thorough fair lending tests. This includes a search for the least discriminatory model, or the model that is proven to mitigate disparate impact for protected class borrowers.

When these models are properly tested to lessen disparity, they can increase approvals and improve lending outcomes for protected classes without increasing risk. For example, Verity Credit Union has been able to increase approvals by 271% for individuals aged 62+, 177% for Black Americans, 375% for Asian Pacific Islanders, 194% for women, and 158% for Latinos through the use of AI-automated underwriting.
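To make the idea of a fair lending test a little more concrete, here is a minimal sketch of one widely used disparity measure, the adverse impact ratio: the approval rate of a protected group divided by the approval rate of a comparison group. The article does not say which tests or metrics are actually used, so the choice of metric, the names `GroupOutcome` and `adverse_impact_ratio`, and every number below are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroupOutcome:
    """Approval counts for one group of applicants (hypothetical data)."""
    applications: int
    approvals: int

    @property
    def approval_rate(self) -> float:
        if self.applications <= 0:
            raise ValueError("applications must be positive")
        return self.approvals / self.applications


def adverse_impact_ratio(protected: GroupOutcome, control: GroupOutcome) -> float:
    """Protected-group approval rate divided by control-group approval rate."""
    if control.approval_rate == 0:
        raise ValueError("control group has no approvals; ratio is undefined")
    return protected.approval_rate / control.approval_rate


# Made-up counts, not taken from any real lender.
protected_group = GroupOutcome(applications=200, approvals=90)   # 45% approved
control_group = GroupOutcome(applications=400, approvals=240)    # 60% approved

air = adverse_impact_ratio(protected_group, control_group)
print(f"Adverse impact ratio: {air:.2f}")
```

A ratio close to 1.0 means the two groups are approved at similar rates. Comparing this ratio across candidate models that perform equally well is one way to think about the search for a less discriminatory model; what threshold triggers a review is a policy decision that this sketch does not settle.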

Expectation: AI is unstable and untrustworthy.

Reality: The right technology partners will “lock” their AI.

“Locked AI” refers to machine learning models that are trained on specific data sets and do not learn on their own. This makes outputs (like lending decisions) easier to monitor and more consistent. In contrast, an unlocked model poses risks because its results can’t be easily explained or monitored. It’s constantly adding and reacting to new variables, obscuring your ability to see how accurate its lending decisions are.
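As a rough illustration of what “locked” means in practice, the sketch below scores an applicant with weights that are fixed after training and cannot be changed at scoring time. It uses a simple logistic formula as a stand-in and is not a description of any vendor’s actual model. The feature names, weights, and intercept are all invented for the example.

```python
import math
from types import MappingProxyType

# Hypothetical weights, fixed once training is finished.
# MappingProxyType gives a read-only view, so scoring code cannot alter them.
LOCKED_WEIGHTS = MappingProxyType({
    "debt_to_income": 3.0,
    "missed_payments": 0.8,
    "years_of_history": -0.15,
})
LOCKED_INTERCEPT = -2.0


def default_probability(applicant: dict) -> float:
    """Return an estimated probability of default using the locked weights."""
    z = LOCKED_INTERCEPT + sum(
        weight * applicant[name] for name, weight in LOCKED_WEIGHTS.items()
    )
    return 1.0 / (1.0 + math.exp(-z))


# Made-up applicant for illustration.
applicant = {"debt_to_income": 0.35, "missed_payments": 1, "years_of_history": 6}

first_score = default_probability(applicant)
second_score = default_probability(applicant)

# The same input always produces the same output, because nothing is updated
# between calls.
print(first_score == second_score)
```

Because the weights never change between calls, any decision can be reproduced later from the same inputs, which is what makes monitoring and documentation straightforward. Updating the model would mean training and validating a new version, then deploying it deliberately.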

Machine learning models that are locked and supervised—meaning that they are fully explainable and will not become a “black box” of predictions—will be able to produce robust documentation that can be used to satisfy examiners.

It’s critical that lenders have robust documentation for their AI models, which will include the data used, fairness testing, and all details necessary for a compliant, transparent model. Locked AI models will produce consistent, accurate results that can be thoroughly documented.

Even when using a generative AI tool, it should still be trained on a specific data set and given a specific use case. Generative AI should never make credit decisions for you, but it can help you access and organize information that will help formulate your lending strategy.

Expectation: AI is just for big financial institutions.

Reality: AI is accessible to all types of institutions, no matter how big or small.

It’s no secret that big banks are using AI. However, credit unions and community banks of all sizes have been pioneering the use of AI technology for years. AI gives community-based lenders a more level playing field against larger institutions. Not only do they have the same powerful machine learning technology at their fingertips, but they also have a local touch that makes for a more personalized experience. Tailored AI models add value to lenders serving niche markets, including specific regions, communities, or professions.

Credit unions that process only around 100 loan applications a year, and those with under $2M in assets, have been using the same AI underwriting technology as institutions that process hundreds of thousands of applications and hold far more than $1B in assets.

Expectation: AI is magic and spells.

Reality: It’s just math.

At Zest AI, we like to say, “the key is more data and better math.” AI can do incredible things, but it can be even stronger when used with intention and understanding.

When you look under the hood of any technology (especially powerful AI models), you’ll discover human beings behind the scenes. Together, they figure out the best way to tackle an issue. Whether it’s programming a computer to play checkers or developing a more accurate way to assess credit risk, it’s the diverse perspectives and expertise of people that drive innovation.

High-performance machines require high-caliber data scientists. The success isn’t due to a mysterious algorithm or a black box; it’s the result of a determined, problem-solving team. While AI may seem like magic, it’s the thoughtful application of data science and human ingenuity that truly brings its potential to life.

________________________________________________

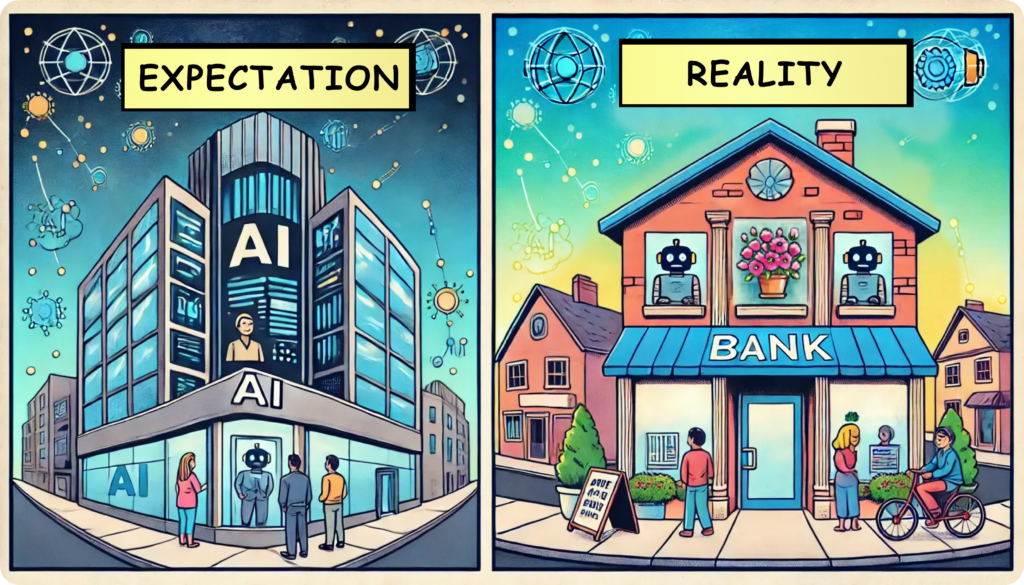

Regarding the artwork…

Expectation: We spent hundreds of hours drawing these illustrative comics.

Reality: AI generated these crazy images!

Hope you found them as fun as we did!